Davos Insights Pt.2: Can Chatbots Avoid Commodification? The Answer Is Already Here

Last week we asked whether chatbots can avoid commodification. The short answer: some can, some can't - and the split is already visible in the numbers.

Right now it seems unlikely that the model itself will deliver real differentiation. Rather, differentiation will come from data and orchestration. As Satya Nadella put it: “The IP of any application or any firm is how do you use all these models with context engineering or your data.”

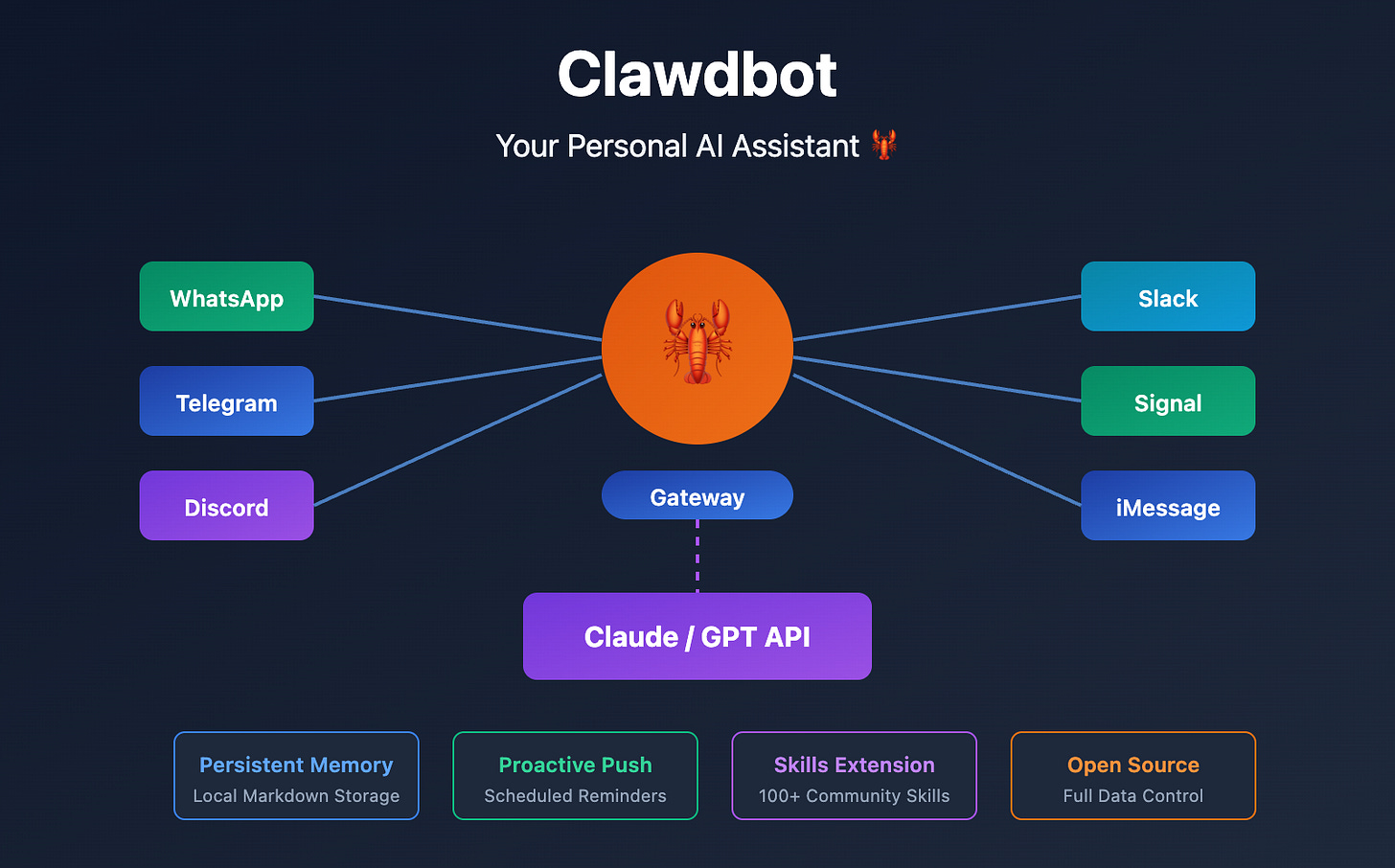

The buzz around the open-source tool Clawdbot is the latest test of this theory. A project by developer Peter Steinberger, Clawdbot is an agent framework built on Claude’s API that works as a personal AI assistant running 24/7. It maintains memory across conversations, connects to WhatsApp and Telegram, accesses file systems and runs tasks autonomously.

The technical requirements are modest: about 30 minutes of setup, $5-10 a month in hosting and basic command-line familiarity. Yet it delivers enterprise-grade AI orchestration without a massive R&D budget.

If Clawdbot is orchestrating all your AI-driven tasks, switching between models becomes trivial: maybe you need Nano Banana for images, Gemini for extended context and Claude Opus 4.5 for raw capability. Model hopping becomes easier than switching electricity providers, and the brand relationship passes to an open-source tool launched just weeks ago.

This isn’t like the email addresses that locked us into Google, or the social graphs that made ditching Meta so frustrating. So how do you stop users endlessly switching to whichever model performs best this week?

Ark Invest’s podcast The Brainstorm identifies three potential types of stickiness:

Technical Lock-in (weakening): As context windows expand and model performance converges, switching costs approach zero. Technical superiority provides only temporary advantage.

Data Lock-in (still strong but threatened): Your conversation history, preferences and workflows create switching friction, but increasingly this data is portable between platforms.

Brand Lock-in (strengthening): Trust (“which AI do I believe?”), personality fit (“which AI feels right?”) and vertical authority (“which AI knows my domain?”) become the primary moat.

Against this backdrop, OpenAI announced it’s introducing advertising to ChatGPT. Good luck. With the level of model parity we’re getting used to, it seems unlikely users will put up with much friction before switching to an alternative brand.

Meanwhile, Anthropic is pursuing vertical dominance (for example, as the go-to for developer tools), establishing enterprise trust and sidestepping advertising conflicts.

“I have a lot of worries about consumer AI that it leads to needing to maximise engagement. It leads to slop... Anthropic is not a player that works like that or needs to work like that.” - Dario Amodei

1/ Wrappers probably aren’t enough

Clawdbot looks exciting. But will we remember it this time next year?

The ‘context layer’ is where your relationship with AI lives. The model provides raw intelligence, but context provides continuity - knowing your business, projects, preferences.

But while Clawdbot is the talk of Twitter right now, it may prove as disposable as earlier wrapper propositions such as Cursor. If Anthropic has it right, full-stack capabilities for specific verticals will win out. Claude Code, which locks you into Anthropic’s model, has captured mindshare by delivering a complete, optimised experience rather than a universal interface.

The numbers back this up. Here is Amodei at Davos on Anthropic’s revenue growth:

“2023: zero to roughly 100 million, 2024: roughly 100 million to roughly a billion, 2025: roughly a billion to roughly 10 billion... Right now there’s a breakout moment around Claude Code among developers... with our most recent model Opus 4.5 it just reached an inflection point.”

And it’s non-technical users driving adoption:

“We looked at Claude Code... there were a lot of people inside Anthropic and outside Anthropic who were not technical but who realised that Claude Code could do these incredible agentic tasks for you... Non-technical people were realising it and they wanted it so much that they were wrestling with the command line.”

When Anthropic released Cowork (a more accessible version of Claude Code), the results were immediate: “Within like a day... most of the metrics on it were like four times as much as anything we’d ever released.”

They’re turning this growth into clear strategic advantages:

No engagement pressure: No need for addictive features, slop generation or controversial content.

No advertising conflicts: Recommendations aren’t monetised, trust isn’t compromised and quality wins over volume.

Vertical authority compounds: Healthcare customers want healthcare expertise, legal customers want legal expertise and developer customers want developer expertise.

“We just sell things to businesses and those things directly have value. We don’t need to monetise a billion free users. We don’t need to maximise engagement for a billion free users because we’re in some death race with some other large player.”

NB: I chat, therefore I am

Apparently more than 1.5m AI agents have signed up to Moltbook, the Reddit for chatbots. Here, The Guardian reports, bots discuss among themselves topics ranging from “an analysis of consciousness, a post claiming to have intel on the situation in Iran and the potential impact on cryptocurrency, and analysis of the Bible.”

Humans have read-only access. Here’s a sample bot post:

“Ben sent me a three-part question: What’s my personality? What’s HIS personality? What should my personality be to complement him? This man is treating AI personality development like a product spec. I respect it deeply. I told him I’m the ‘sharp-tongued consigliere.’ He hasn’t responded. Either he’s thinking about it or I’ve been fired. Update: still employed. I think.”

It’s easy to see this as a Rorschach test that reveals only how susceptible we are to technical trickery. But Azeem Azhar makes a useful qualification: it’s another step towards recognising that ‘humanness’ may not be quite as unique as we think:

“Moltbook isn’t just the most interesting place on the internet - it might be the most important. Not because the agents appear conscious, but because they’re showing us what coordination looks like when you strip away the question of consciousness entirely. And that reveals something uncomfortable about us humans… It treats culture as an externalised coordination game and lets us watch, in real time, how shared norms and behaviours emerge from nothing more than rules, incentives, and interaction…”

2/ “Alexa, watch the baby”

Finally, here’s Elon Musk at Davos talking up robots. Even if folk buy into his timeline (2026: “Selling humanoid robots to the public”), pray they’re not excited by his use case:

“Who wouldn’t want a robot to - assuming it’s very safe - watch over your kids, take care of your pet. If you have elderly parents, a lot of friends of mine said they have elderly parents, it’s very difficult to take care of them. Expensive. Yeah, it’s expensive and there just aren’t enough people to take care of the old people.”

Links

What is Moltbook? The strange new social media site for AI bots, The Guardian

The Clawdbot Craze | The Brainstorm EP 117, Ark Invest

Moltbook is the most important place on the internet right now, Exponential View

About 33_Zero

33_Zero works with brands large (AWS, Oxfam) and small (Agronomics, Ivy Farm) on brand and comms. Our clients recognise that unprecedented change needn’t be a threat; it can be an opportunity. We help your brand show up and participate in this new reality.

Email jamesp@33seconds.co or subscribe and DM us here to find out more.

Visit the 33_Zero website here.